Neural Networks From Scratch Part 1: Fundamentals

Asher Best • January 31, 2026

Introduction to Neural Networks and the Contents of this Blog Post

In this post, we’ll build a foundational understanding of how neural networks work, from perceptrons to backpropagation. In part 2, we will apply this understanding to build one from scratch in Python.

What Neural Networks Are and Why Neural Networks Matter

A neural network is a machine learning model that stacks simple “neurons” in layers and learns pattern-recognizing weights and biases from data to map inputs to outputs.1 Machine learning offers many algorithms, each with its own applications, but neural networks rank among the most popular in modern machine learning and artificial intelligence (AI). Popular Large Language Models (LLMs) that power AI assistants and agents, such as the GPT series from OpenAI, Gemini by Google, and Llama by Meta, are built on neural network architectures. Neural networks also underpin many facial recognition, computer vision, and speech recognition systems.

Who This Post Is For

This post is for readers of all skill levels. Whether you are a hobbyist, a novice programmer, or a technologist looking to brush up on your fundamentals, this post aims to communicate these concepts to a broad audience. Keep in mind that neural networks are extremely complex, and it is almost impossible to cover every topic in depth in a blog post. I encourage you to dive deeper into these topics through the resources provided below if you want to take your knowledge to the next level.

Why I Wanted To Learn How to Build a Neural Network from Scratch

I wanted to learn how to build a neural network from scratch for a few reasons. First, it sounded like a fun and challenging project to increase both my knowledge and skill set in computer science. Second, with the AI wave, I wanted to be able to speak to how LLMs actually work under the hood rather than only how they appear on the surface. Lastly, I am fascinated by how the brain works, and the idea of simulating brain activity in code intrigues me.

The Basics of Neural Networks

Why These Basics Matter

Learning the basics of neural networks is of utmost importance before diving further into the mechanics and equations. The formulas and calculations do little for us if we cannot visualize how the values we give our neural network travel through its components and connections to produce the result we are working towards. Let’s break down the individual components of a neural network to start.

Perceptrons and their Link to NAND Gates

Although perceptrons are not the primary neuron model used in modern neural networks, understanding how they work will lay the foundation for learning modern neural network architecture. So what is a perceptron? Developed by the scientist Frank Rosenblatt in the 1950s and 1960s, a perceptron is a type of artificial neuron that seeks to mimic a biological neuron. It takes one or more binary inputs and produces a single binary output.2

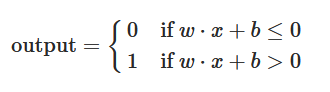

Each input is multiplied by a level of importance (known as a weight), and the sum of these weighted inputs determines the output. If this sum is greater than some predefined threshold, the output is 1 (true); otherwise it is 0 (false). To simplify this expression further, let’s convert the threshold to what is known in machine learning as a bias (the negative of the threshold), move it to the other side of the inequality, and use dot product notation for our inputs and weights. If the dot product of the weights and inputs plus the bias is less than or equal to zero, the output is 0; otherwise it is 1.
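To make the rule above concrete, here is a minimal sketch of a single perceptron in plain Python. The function name, the "go for a run" scenario, and the specific weights and bias are all made up for illustration; they are not taken from any library or from the figures in this post.

```python
def perceptron(inputs, weights, bias):
    """Return 1 if the dot product of weights and inputs plus the bias is positive, else 0."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))
    return 1 if weighted_sum + bias > 0 else 0


# Hypothetical decision: should I go for a run?
# Inputs (binary): [weather is good, a friend is joining, it is a rest day]
weights = [4, 2, -3]  # illustrative importance of each input
bias = -3             # equivalent to a threshold of 3

print(perceptron([1, 0, 0], weights, bias))  # good weather alone: 4 - 3 = 1 > 0, so 1
print(perceptron([0, 1, 0], weights, bias))  # friend alone: 2 - 3 = -1 <= 0, so 0
```

Notice that a larger weight makes an input matter more to the decision, while a more negative bias makes the perceptron harder to "fire".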

You can start to imagine many perceptrons being connected to drive decisions and adjust the output depending on the size and complexity of the network. The perceptron connections might make it seem like there are multiple possible values (one value per arrow) leaving each perceptron, but in reality, each perceptron produces a single output that is sent along every outgoing arrow (the arrows are simply visual candy, if you will). You can think of the inputs and weights along the arrows as vectors, which can be arranged into matrices so that the dot products for a whole layer are calculated together. I highly suggest watching 3Blue1Brown’s tutorial playlist on linear algebra to learn more about matrices and vectors.3

How are NAND (NOT AND) gates related to the perceptron model? Let’s first talk about what an AND gate does so we can understand its polar opposite. An AND gate takes two binary values as input and produces a single binary result: true (1) only when both inputs are true, and false (0) otherwise. Figure 4 below displays the binary output produced from two binary inputs for both an AND gate and a NAND gate, respectively. As you can see, the result of the NAND gate is the exact opposite value of the AND gate!
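The truth tables described above can be reproduced in a few lines of Python, and a perceptron can be made to compute NAND directly. This is only a sketch: the helper names are hypothetical, and the weights of -2 with a bias of 3 are one choice that works, not the only one.

```python
def perceptron(inputs, weights, bias):
    """Return 1 if the weighted sum plus the bias is positive, else 0."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))
    return 1 if weighted_sum + bias > 0 else 0


def and_gate(a, b):
    return a & b


def nand_gate(a, b):
    return 1 - (a & b)


def nand_perceptron(a, b):
    # Assumed parameters: both weights -2 and bias 3 (one working choice among many).
    return perceptron([a, b], [-2, -2], 3)


print("a b | AND NAND perceptron")
for a in (0, 1):
    for b in (0, 1):
        print(f"{a} {b} |  {and_gate(a, b)}   {nand_gate(a, b)}    {nand_perceptron(a, b)}")
```

For every input pair, the perceptron column matches the NAND column: only the input (1, 1) pushes the weighted sum (-4) plus the bias (3) below zero.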

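A perceptron can be set up to behave exactly like a NAND gate, and because any logic circuit can be built from NAND gates, a network of perceptrons closely mimics NAND gate architecture. I will let you stop to appreciate the similarities through the images below. When you are ready, let’s move on to sigmoid neurons so we can take our network to the next level.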

How Sigmoid Neurons Differ From Perceptrons

A sigmoid neuron enhances the perceptron by extending the range of possible output values. The key difference between the two is that a sigmoid neuron can output any value between 0 and 1, whereas a perceptron only outputs either 0 or 1. To derive the value for a sigmoid neuron, we use a non-linear activation function called the sigmoid function.4 This function wraps our previous formula (the dot product of the weights and inputs plus the bias) and squashes the result into a value that falls between 0 and 1. Now that we have the basic components that comprise a neural network, let’s talk about the overall architecture.
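As a rough sketch of the sigmoid neuron described above, the snippet below wraps the weighted sum in the sigmoid function, 1 / (1 + e^(-z)). The function names are hypothetical, and the weights and bias are illustrative values only. The two-branch form of `sigmoid` is an implementation choice to avoid overflow for very negative inputs.

```python
import math


def sigmoid(z):
    """Squash any real number into the range 0 to 1."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    # Equivalent form that avoids overflow when z is a large negative number.
    exp_z = math.exp(z)
    return exp_z / (1.0 + exp_z)


def sigmoid_neuron(inputs, weights, bias):
    """Weighted sum plus bias, passed through the sigmoid activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)


print(sigmoid(0))                           # 0.5, the midpoint of the curve
print(sigmoid_neuron([1, 0], [-2, -2], 3))  # z = 1, roughly 0.73
print(sigmoid_neuron([1, 1], [-2, -2], 3))  # z = -1, roughly 0.27
```

Where a perceptron with these parameters would jump straight from 1 to 0, the sigmoid neuron moves smoothly between them, so a small change in a weight produces a small change in the output.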

The Architecture of Neural Networks

The architecture of neural networks comprises three different layer types: the input layer, one or more hidden layers, and the output layer. You can set the number of neurons within any given layer depending on what you are attempting to accomplish. More hidden layers typically give the network greater capacity to learn but increase training complexity and the risk of overfitting (where a network learns its training data so closely, noise included, that it fails to generalize the true underlying patterns and performs poorly on new data). The right level of complexity ultimately depends on your end goal. As we provide our network with training data, the network performs what is called a feed forward pass (or forward propagation), where the output from one layer becomes the input to the next layer. This process is repeated through the network until it reaches the final output. This is in direct contrast to backpropagation, where we traverse backwards through the network from output to input, which we will cover in the next section.
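Here is a small sketch of forward propagation through a network with 2 inputs, one hidden layer of 3 sigmoid neurons, and 1 output neuron. The layer sizes, the way layers are stored as (weights, biases) pairs, and every number below are assumptions chosen for illustration; an untrained network like this produces a meaningless output, but it shows how values flow from layer to layer.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(inputs, weights, biases):
    """Compute one layer's outputs; weights[j] holds the incoming weights of neuron j."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def feed_forward(inputs, layers):
    """Pass the inputs through every layer, feeding each output into the next layer."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Hypothetical, untrained parameters: 2 inputs -> 3 hidden neurons -> 1 output.
layers = [
    ([[0.5, -0.6], [0.1, 0.8], [-0.3, 0.2]], [0.0, 0.1, -0.1]),  # hidden layer
    ([[0.7, -0.4, 0.9]], [0.05]),                                # output layer
]

output = feed_forward([1.0, 0.0], layers)
print(output)  # a single value between 0 and 1
```

Adding another hidden layer is just one more (weights, biases) entry in the list, as long as each neuron has one weight per output of the previous layer.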

Now that we have a base understanding of the building blocks of a neural network, our next question should be: how does it actually learn?

The Training Mechanics of Neural Networks

Gradient Descent and the Cost Function

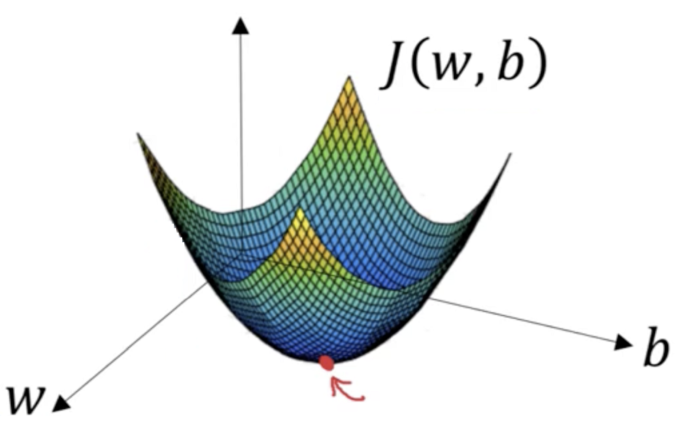

Imagine a ball rolling down a hill, where the goal is to move the ball to the lowest possible valley. Within this landscape, we have the hilly terrain and the current position of the ball. Since the goal is to move downward, we need to track which direction is uphill and steepest, then take steps in the opposite direction so the ball gradually slides further and further down toward the lowest point of the terrain. This is the essence of gradient descent. Gradient descent is an optimization algorithm for finding a local minimum of a differentiable function and is used to find the values of a function’s parameters (coefficients) that minimize a cost function as much as possible.5 The cost/loss function measures the difference between the predicted and actual outputs (also known as the error of the model). The gradient is a vector that tells us the direction and rate of steepest increase of the function. Finally, the learning rate determines the size of the step taken downhill. The goal is to find a learning rate that is neither too big (the steps overshoot the minimum) nor too small (progress is very slow).
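The ball-on-a-hill picture can be sketched with a one-parameter example. The cost function (w - 3)^2 below is a toy "hill" chosen purely for illustration, with its lowest point at w = 3; a real network has a far more complicated cost surface and many parameters.

```python
def cost(w):
    """Toy cost function with its minimum at w = 3."""
    return (w - 3) ** 2


def gradient(w):
    """Derivative of the cost: positive when uphill is to the right."""
    return 2 * (w - 3)


def gradient_descent(start, learning_rate, steps):
    w = start
    for _ in range(steps):
        # Step in the opposite direction of the gradient (downhill).
        w -= learning_rate * gradient(w)
    return w


w_final = gradient_descent(start=0.0, learning_rate=0.1, steps=100)
print(w_final, cost(w_final))  # w ends up very close to 3, cost very close to 0

# A learning rate that is too large overshoots further on every step.
w_diverged = gradient_descent(start=0.0, learning_rate=1.1, steps=20)
print(w_diverged)  # far away from 3
```

With a learning rate of 0.1, each step shrinks the distance to the minimum by a constant factor; with 1.1, each step lands further from the minimum than the last, which is why picking the learning rate matters.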

Speeding Up Learning with Stochastic Gradient Descent (SGD) and Mini-Batches

There are a few different types of gradient descent: batch gradient descent, stochastic gradient descent, and mini-batch gradient descent. Batch gradient descent calculates the error for each sample in the training dataset but only updates the parameters after all training samples have been evaluated. It is computationally efficient and produces a stable gradient, but it can settle into a state of convergence that is not the best the model can achieve (the updates become minuscule without quite reaching the optimal result). With stochastic gradient descent, parameters are updated after each training sample. The frequent updates are more computationally expensive and can produce noisy gradients. However, it often makes progress faster than batch gradient descent, and the frequent updates give a more detailed picture of the rate of improvement. Finally, mini-batch gradient descent is a combination of batch and stochastic gradient descent. It splits the training samples into small batches and updates the parameters after each batch. This is a nice middle ground between the two and is often the chosen method for neural networks.5
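One way to see how the three variants relate is that they differ only in how many samples are used per update. The sketch below fits a straight line, y = w * x + b, to made-up noiseless data generated from y = 2x + 1. The `train` function, its parameters, and the dataset are all hypothetical and chosen for illustration; setting `batch_size` to the dataset size gives batch gradient descent, 1 gives stochastic gradient descent, and anything in between gives mini-batch gradient descent.

```python
import random


def train(data, batch_size, learning_rate=0.05, epochs=1000, seed=0):
    """Fit y = w * x + b with gradient descent on the mean squared error."""
    rng = random.Random(seed)
    samples = list(data)
    w, b = 0.0, 0.0
    for _ in range(epochs):
        rng.shuffle(samples)
        for start in range(0, len(samples), batch_size):
            batch = samples[start:start + batch_size]
            # Average the gradient of the squared error over the current batch.
            grad_w = sum(2 * (w * x + b - y) * x for x, y in batch) / len(batch)
            grad_b = sum(2 * (w * x + b - y) for x, y in batch) / len(batch)
            # One parameter update per batch.
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
    return w, b


# Made-up dataset: 20 points on the line y = 2x + 1.
data = [(k / 10, 2 * (k / 10) + 1) for k in range(20)]

for name, size in [("batch", len(data)), ("stochastic", 1), ("mini-batch", 5)]:
    w, b = train(data, batch_size=size)
    print(f"{name:>10}: w = {w:.3f}, b = {b:.3f}")
```

On this tiny, noise-free problem all three variants should recover values close to w = 2 and b = 1. The trade-offs described above (noisy gradients, cost per update) only become visible on larger, messier datasets.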

Backpropagating Through the Network

We briefly discussed forward propagation, so let’s talk about its opposite: backward propagation (or backpropagation for short). The backpropagation procedure entails calculating the error between the predicted output and the actual target output, then passing that information in reverse through the feedforward network, starting from the last layer and moving towards the first.6 Backpropagation uses the chain rule from calculus to calculate the partial derivatives of the loss function with respect to the weights and biases in each layer (the gradient of the loss function). Gradient descent then uses these gradients to fine-tune the network’s weights and biases, moving them in the opposite direction of the gradient and towards the minimum of the cost function. In essence, backpropagation is like checking your work on a math problem each step of the way and correcting earlier mistakes based on the final answer.
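To see the chain rule at work, here is a sketch of backpropagation on about the smallest network possible: one input, one hidden sigmoid neuron, and one output sigmoid neuron. The network shape, the squared-error loss, the starting parameters, and the learning rate are all assumptions made for illustration. The numerical check at the end nudges one weight slightly and confirms that the loss changes the way the chain rule predicts.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, params):
    w1, b1, w2, b2 = params
    h = sigmoid(w1 * x + b1)      # hidden neuron output
    y_hat = sigmoid(w2 * h + b2)  # network output
    return h, y_hat


def loss(x, y, params):
    _, y_hat = forward(x, params)
    return 0.5 * (y_hat - y) ** 2


def backprop(x, y, params):
    """Return the gradient of the loss with respect to [w1, b1, w2, b2]."""
    w1, b1, w2, b2 = params
    h, y_hat = forward(x, params)
    # Output layer: error times the sigmoid derivative, s * (1 - s).
    delta2 = (y_hat - y) * y_hat * (1 - y_hat)
    # Hidden layer: pass delta2 backwards through w2, then through the sigmoid.
    delta1 = delta2 * w2 * h * (1 - h)
    return [delta1 * x, delta1, delta2 * h, delta2]


x, y = 1.0, 0.0                     # one made-up training example
start = [0.5, -0.2, 0.8, 0.1]       # hypothetical starting parameters
initial_loss = loss(x, y, start)

params = list(start)
for _ in range(1000):
    grads = backprop(x, y, params)
    # Gradient descent step: move against the gradient.
    params = [p - 0.5 * g for p, g in zip(params, grads)]
final_loss = loss(x, y, params)
print(initial_loss, final_loss)     # the loss shrinks as training proceeds

# Numerical check of the w1 gradient at the starting parameters.
eps = 1e-6
nudged_up = [start[0] + eps] + start[1:]
nudged_down = [start[0] - eps] + start[1:]
numeric = (loss(x, y, nudged_up) - loss(x, y, nudged_down)) / (2 * eps)
print(numeric, backprop(x, y, start)[0])  # the two values should nearly match
```

The key pattern is that `delta1` reuses `delta2`: the error signal computed at the output is carried backwards one layer at a time, which is what keeps backpropagation efficient in deeper networks.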

Summary

In this post, we covered quite a lot: perceptrons, NAND gates, sigmoid neurons, sigmoid functions, architecture/layers, gradient descent, types of gradient descent, forward propagation, and backward propagation. I hope you found this post engaging and insightful. I sincerely appreciate you taking the time out of your busy schedule to read it. Stay tuned for part 2, where we’ll take everything we’ve learned and build a neural network that detects galaxies from scratch in Python!

References

1. Lee, F. (2025, November 17). What is a neural network? IBM. https://www.ibm.com/think/topics/neural-networks

2. Nielsen, M. (2019, December). Neural Networks and Deep Learning. http://neuralnetworksanddeeplearning.com/

3. 3Blue1Brown. (n.d.). Essence of Linear Algebra [Playlist]. YouTube. Retrieved January 31, 2026, from https://www.youtube.com/playlist?list=PLZHQObOWTQDPD3MizzM2xVFitgF8hE_ab

4. Sigmoid Function. (2025, July 23). GeeksforGeeks. https://www.geeksforgeeks.org/machine-learning/derivative-of-the-sigmoid-function/

5. Donges, N. (2024, August 1). Gradient Descent in Machine Learning: A Basic Introduction. Built In. https://builtin.com/data-science/gradient-descent

6. Logunova, I. (2023, December 18). Backpropagation in Neural Networks. Serokell Software Development Company. https://serokell.io/blog/understanding-backpropagation

Glossary

Rather than reinvent the glossary wheel, see Google’s ML Glossary for a comprehensive glossary of machine learning terms.